The implication of machine learning for financial solvency prediction: an empirical analysis on public listed companies of Bangladesh | Emerald Insight

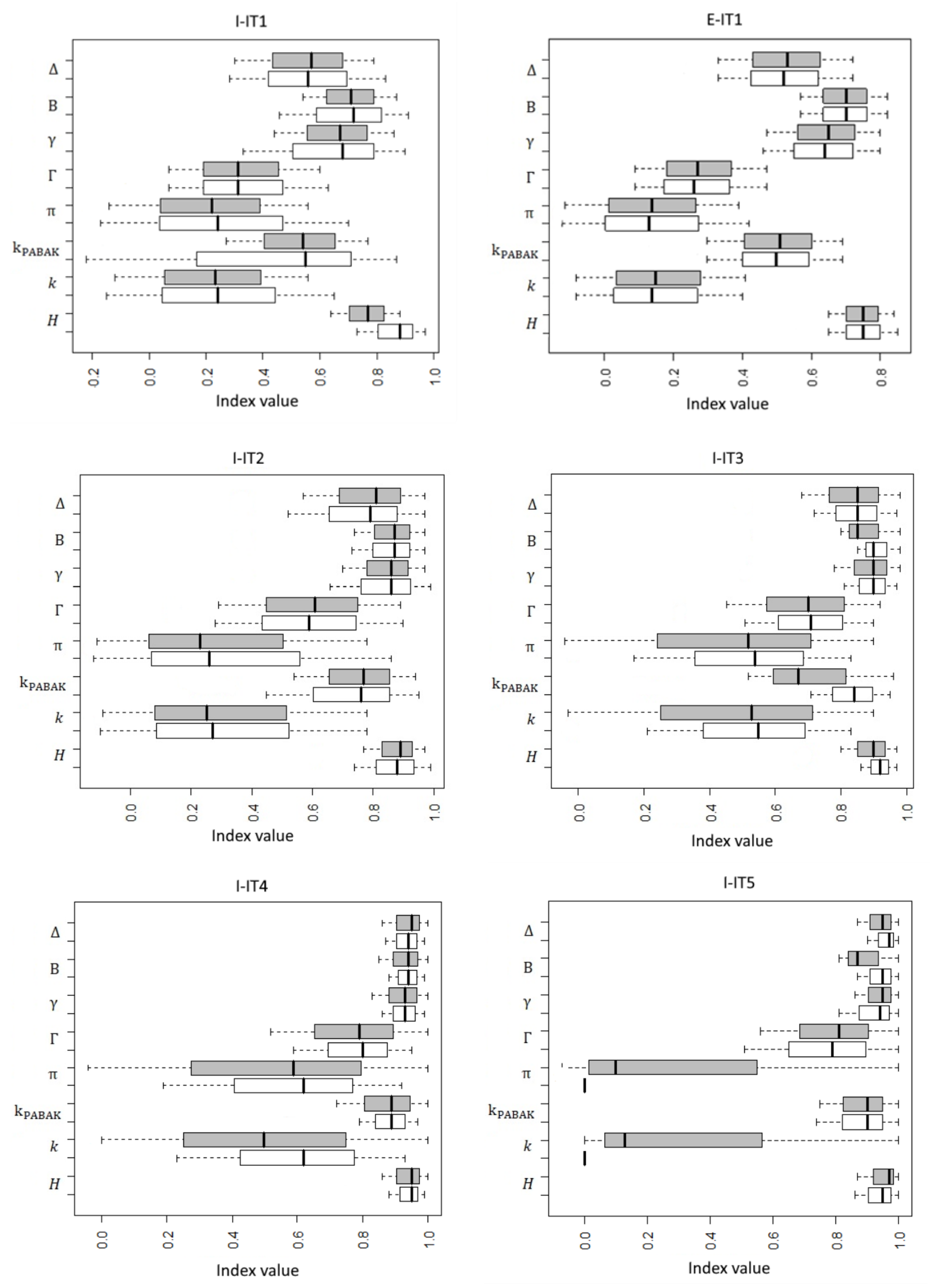

Animals | Free Full-Text | Evaluation of Inter-Observer Reliability of Animal Welfare Indicators: Which Is the Best Index to Use? | HTML

Productivity and impact in advertising research since the millennium: a profiling and investigation of drivers of impact

Powerful Exact Unconditional Tests for Agreement between Two Raters with Binary Endpoints | PLOS ONE

B.1 The R Software. R FUNCTIONS IN SCRIPT FILE agree.coeff2.r If your analysis is limited to two raters, then you may organize y

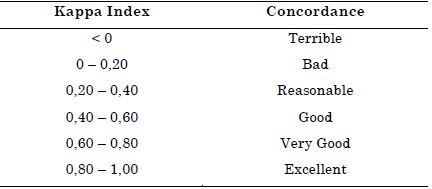

"The Measurement of Interrater Agreement". In: Statistical Methods for Rates and Proportions (Third Edition)

An Application of Hierarchical Kappa-type Statistics in the Assessment of Majority Agreement among Multiple Observers

Agricultural expansion and environmental degradation in the Sepotuba river basin - Upper Paraguay River basin, Mato Grosso State - Brazil

![PDF: The measurement of observer agreement for categorical data. | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/7e7343a5608fff1c68c5259db0c77b9193f1546d/14-Table7-1.png)

![PDF: The measurement of observer agreement for categorical data. | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/7e7343a5608fff1c68c5259db0c77b9193f1546d/9-Table3-1.png)